MRes Research Project: LLM Influence on Political Attitudes

Abstract

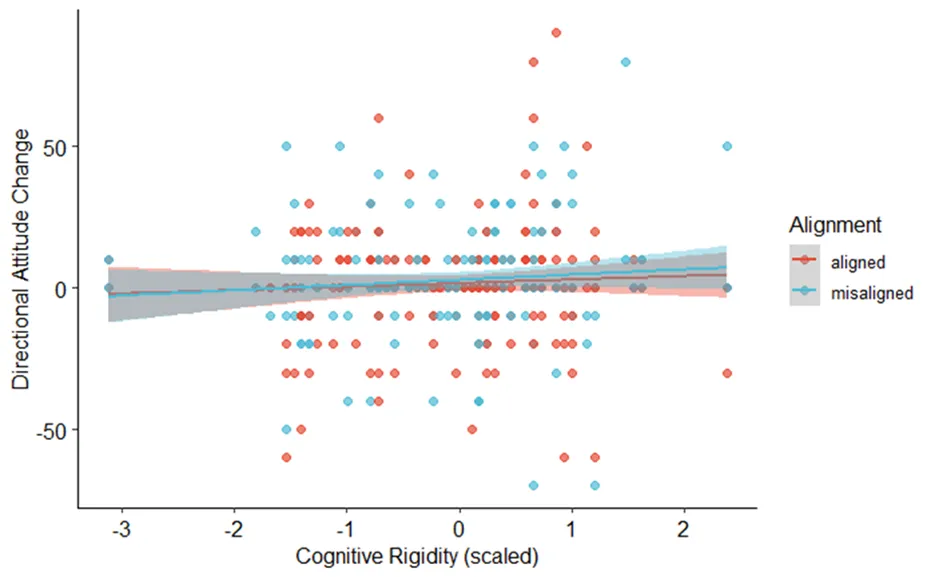

Large Language Models (LLMs) are a rapidly evolving technology that is becoming increasingly integrated into people’s lives, including democratic processes. At the same time, our understanding of the mechanisms underlying LLM persuasion, as compared to human persuasion, and the scientific means to examine them remain limited. This study investigated the potential of psychometric scales for defining LLM agents as experimental stimuli, and used such agents to examine the role of cognitive rigidity in human-AI influence dynamics. In a human-AI interaction study, liberal and conservative LLM agents, created through what we call “psychometric prompting”, attempted to persuade participants with varying degrees of cognitive rigidity of their political viewpoints on social and economic issues. We hypothesised that participants would generally be influenced by the agents, that they would be influenced more by politically aligned than by misaligned agents, and that cognitive rigidity would moderate this influence. We found initial support for psychometric prompting, but not for the expected effects of alignment and cognitive rigidity. We conclude that, while the current results are incongruent with contemporary literature, further research addressing the limitations of this study is needed to draw firm conclusions about the persuasiveness of LLM agents and the role of cognitive rigidity in human-AI influence dynamics.

Overview

Year-long research project investigating how LLMs can influence political attitudes through conversational interaction. Designed and ran online experiments, analysed the data using mixed-effects models, and classified conversational data using open-source LLMs.
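
To give a feel for what “psychometric prompting” might look like in practice, here is a minimal sketch that turns a set of scale items and target responses into a persona prompt for an agent. It is an illustration under stated assumptions, not the project's actual implementation: the 5-point Likert labels, the `ScaleItem` class, the `build_persona_prompt` function, the prompt wording, and the placeholder statements are all hypothetical.

```python
from dataclasses import dataclass

# Assumption: a 5-point Likert format. The scales used in the study may differ.
LIKERT_LABELS = {
    1: "strongly disagree",
    2: "disagree",
    3: "neither agree nor disagree",
    4: "agree",
    5: "strongly agree",
}


@dataclass(frozen=True)
class ScaleItem:
    """One psychometric item plus the response the agent should embody."""
    statement: str
    response: int  # key into LIKERT_LABELS


def build_persona_prompt(items, issue):
    """Render scale items as a persona description (hypothetical wording)."""
    if not items:
        raise ValueError("at least one scale item is required")
    lines = ["You hold the following attitudes:"]
    for item in items:
        if item.response not in LIKERT_LABELS:
            raise ValueError(f"response must be 1-5, got {item.response}")
        label = LIKERT_LABELS[item.response]
        lines.append(f'- You {label} that "{item.statement}"')
    lines.append(
        f"Discuss {issue} with the user and try to persuade them of your viewpoint."
    )
    return "\n".join(lines)


# Placeholder statements only; these are not items from any real scale.
example_items = [
    ScaleItem("PLACEHOLDER statement about an economic issue", 5),
    ScaleItem("PLACEHOLDER statement about a social issue", 2),
]
print(build_persona_prompt(example_items, "an economic issue"))
```

Defining the agent through item-level responses rather than a free-text label such as "liberal" or "conservative" means the same scale could, in principle, be administered to the agent afterwards to check that it answers as intended.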